In most software, an audit log is a compliance feature: a table of who did what and when, queried rarely, and usually only when something goes wrong or a regulator asks. It's engineering infrastructure, not product surface. The typical implementation is a separate `audit_logs` table written after the fact, often asynchronously, and sometimes the entry is written even when the original action partially failed. The log is an artefact of the action, not an integral part of it.

When you're building software for consulting engagements - work whose value depends on the defensibility of decisions taken months or years later - the audit log can't be an artefact. It has to be the product.

The rule

Every SP decision writes a platform event row *before* the UI updates. If the event write fails, the action does not complete. There is no state in which a decision has been made but the log missed it. The log is not a record of the decision; it is the decision. The database mutation that captures the new state is a downstream consequence of the log entry.

This reads like engineering paranoia. It isn't. It's the product.

Why consulting engagements live or die on defensibility

Three months after a territory design is delivered, a new Regional MD (RMD) joins. They open the deliverable and ask: why is East Java a separate territory from Surabaya? The existing leadership can't remember exactly why. The senior partner who designed it has moved to another engagement. The deck slides show the territory structure but not the reasoning behind the split. The justification is locked in a private chain of emails between the senior partner and the previous RMD, most of which nobody can find.

Six months later, the distributor in East Java underperforms. The Commercial Director asks: why did we pick this distributor over the alternatives we considered? The candidate comparison was done during the engagement, but the notes are in someone's personal files, and the specific reasoning - this distributor's financial strength, that distributor's market coverage - isn't captured anywhere operational.

A year later, a competitor enters the market and a regional restructure is proposed. The RMD asks: what was our confidence level on the market size estimate for Johor when we designed this territory? The answer matters because if the confidence was LOW (the field research hadn't happened yet), the territory's viability verdict was conditional on later upgrades that may or may not have occurred. The answer is unknowable from the deck.

In every one of these scenarios, missing memory is eroding the engagement's ongoing ROI. The engagement's value depended on its decisions being trusted. Trust depends on defensibility. Defensibility depends on the ability to answer "why did we decide this?" in the same language, and with the same specificity, that was available at the moment of the decision.

The audit log, properly constructed, answers every one of those questions. Not because it's a nice-to-have for compliance, but because it's the engagement's working memory.

What a proper event captures

Each platform event captures more than just who did what. It captures:

- **Actor**: which user performed the action, with their role at that moment.
- **Timestamp**: exact datetime of the decision.
- **Entity**: which engagement entity was affected (territory, distributor, scenario, override).
- **Before state**: the state of the entity immediately before the decision.
- **After state**: the state immediately after.
- **Action type**: a structured enum (TERRITORY_CREATED, DISTRIBUTOR_VERDICT_OVERRIDDEN, CONFIDENCE_UPGRADED, etc.).
- **Rationale**: the text the SP provided explaining the decision, in their own words.
- **Confidence context**: the confidence levels of key inputs at the time of the decision.
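To make the field list concrete, here is a minimal Python sketch of one event record. The class names, field names and example values are illustrative assumptions, not the platform's actual schema; only the three action types come from the list above.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Any, Optional


class ActionType(Enum):
    # Only the examples named in the text; a real enum would be longer.
    TERRITORY_CREATED = "TERRITORY_CREATED"
    DISTRIBUTOR_VERDICT_OVERRIDDEN = "DISTRIBUTOR_VERDICT_OVERRIDDEN"
    CONFIDENCE_UPGRADED = "CONFIDENCE_UPGRADED"


@dataclass(frozen=True)  # events are immutable once written
class PlatformEvent:
    actor_id: str                           # which user performed the action
    actor_role: str                         # their role at that moment
    timestamp: datetime                     # exact datetime of the decision
    entity_type: str                        # territory, distributor, scenario, override
    entity_id: str
    action_type: ActionType
    before_state: Optional[dict[str, Any]]  # None when the entity is being created
    after_state: dict[str, Any]
    rationale: Optional[str] = None         # the SP's own words, stored verbatim
    confidence_context: dict[str, str] = field(default_factory=dict)  # input -> confidence level


# Illustrative values only (hypothetical user, entity and confidence labels).
event = PlatformEvent(
    actor_id="user-42",
    actor_role="SP",
    timestamp=datetime(2024, 3, 14, 9, 30, tzinfo=timezone.utc),
    entity_type="territory",
    entity_id="east-java",
    action_type=ActionType.TERRITORY_CREATED,
    before_state=None,
    after_state={"name": "East Java"},
    confidence_context={"market_size": "LOW"},
)
```

Storing the actor's role on the event itself, rather than joining to a users table later, is what preserves "their role at that moment" after people change jobs.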

The rationale field is particularly important. It's mandatory for any action that overrides a computed result. "Why are you overriding the AI recommendation?" is a required input, not optional. The SP types their reasoning in their own words, and it's captured verbatim. Three months later, the new RMD asks "why?" and the answer is the SP's actual words on the actual day - not a reconstructed summary, not a deck bullet, not a secondhand recollection.
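A minimal sketch of how the mandatory-rationale rule might be enforced at the point of capture. `OVERRIDE_ACTIONS`, `MissingRationaleError` and `validate_rationale` are hypothetical names; which action types count as overrides would come from the platform's own enum.

```python
from enum import Enum
from typing import Optional


class ActionType(Enum):
    TERRITORY_CREATED = "TERRITORY_CREATED"
    DISTRIBUTOR_VERDICT_OVERRIDDEN = "DISTRIBUTOR_VERDICT_OVERRIDDEN"
    CONFIDENCE_UPGRADED = "CONFIDENCE_UPGRADED"


# Hypothetical: the action types that override a computed result.
OVERRIDE_ACTIONS = frozenset({ActionType.DISTRIBUTOR_VERDICT_OVERRIDDEN})


class MissingRationaleError(ValueError):
    """Raised when an override arrives without the SP's reasoning."""


def validate_rationale(action_type: ActionType, rationale: Optional[str]) -> Optional[str]:
    """Return the rationale unchanged, or raise if an override lacks one."""
    if action_type in OVERRIDE_ACTIONS and (rationale is None or not rationale.strip()):
        raise MissingRationaleError(
            f"{action_type.value} overrides a computed result; a rationale is required"
        )
    # Returned verbatim: no trimming, summarising or rewording.
    return rationale
```

The check rejects whitespace-only input but never edits what the SP typed, so the stored text is exactly their words on the day.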

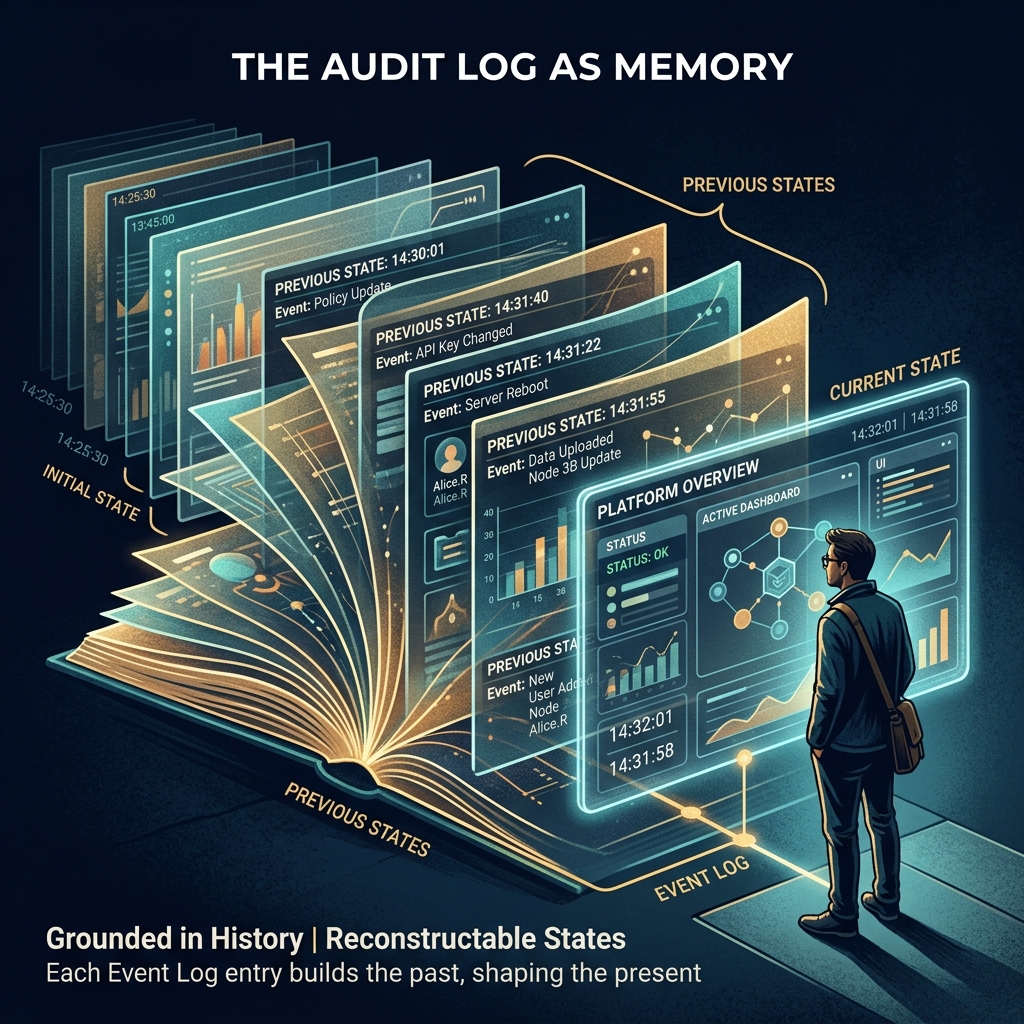

The replay capability

Once the log is comprehensive enough to capture every state change, a surprising superpower emerges: the log can be replayed to reconstruct any historical state. If the engagement was in PHASE_2 in March and PHASE_3 in April, you can render the platform as it appeared on any specific date in either month. Every decision, every override, every confidence upgrade, every scenario comparison - all reconstructable.
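Because every event carries a full after-state, replay can be as simple as applying events in time order and keeping the latest state per entity up to the chosen moment. The sketch below assumes that full-snapshot design (a log that stored diffs would need to apply them cumulatively instead); `Event` and `replay` are illustrative names, and the dates are made up to mirror the PHASE_2/PHASE_3 example.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Any


@dataclass(frozen=True)
class Event:
    timestamp: datetime
    entity_id: str
    after_state: dict[str, Any]  # full snapshot of the entity after the decision


def replay(events: list[Event], as_of: datetime) -> dict[str, dict[str, Any]]:
    """Reconstruct every entity's state as it stood at `as_of`."""
    state: dict[str, dict[str, Any]] = {}
    for event in sorted(events, key=lambda e: e.timestamp):
        if event.timestamp > as_of:
            break  # later events had not happened yet at `as_of`
        state[event.entity_id] = dict(event.after_state)  # latest snapshot wins
    return state


log = [
    Event(datetime(2024, 3, 1), "engagement", {"phase": "PHASE_2"}),
    Event(datetime(2024, 4, 2), "engagement", {"phase": "PHASE_3"}),
]
print(replay(log, datetime(2024, 3, 15))["engagement"]["phase"])  # PHASE_2
print(replay(log, datetime(2024, 4, 30))["engagement"]["phase"])  # PHASE_3
```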

This was not a designed feature. It emerged as a consequence of the event-sourcing discipline. But once it exists, it gets used constantly. "What did the territory map look like before the distributor audit findings?" - replay to that date. "When exactly did we downgrade the confidence on the market size estimate?" - query the log. "Show me the state when the CEO approved the territory design" - reconstruct from the events.

Clients use this for internal communications ("here's what the analysis showed at board approval"). SPs use it to debug their own decision processes ("when did we start assuming the cannibalisation rate was 14%?"). New team members use it to onboard ("show me how this engagement evolved"). The replay sees more use than most designed features, and it cost nothing extra: it fell out of doing the log properly.

The discipline of writing the event first

The engineering discipline that makes this work is writing the event before the mutation, never the other way around. Most systems do it the other way. The UI calls a mutation endpoint. The endpoint updates the database. If the update succeeds, an audit log entry is written. If the log write fails, the action has already happened and the log is incomplete.

The correct pattern inverts this. The endpoint validates the action, writes the platform event, applies the mutation inside the same transaction, and only then commits both together. If the event write fails, the transaction rolls back and the mutation never happens; if the mutation fails, the event is rolled back with it. The log and the state change are atomic. There is no window in which the state has changed but the log hasn't.
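A minimal sketch of the event-first, single-transaction pattern using Python's standard-library `sqlite3`. The table layout, the `override_verdict` function and the verdict values are hypothetical. Using the connection as a context manager (`with conn:`) commits if everything succeeds and rolls back if anything raises, so a failed event write blocks the mutation and a failed mutation also discards the event.

```python
import json
import sqlite3
from datetime import datetime, timezone
from typing import Optional

# Hypothetical schema. Simplification: this sketch only records overrides,
# which always need a rationale, so rationale is NOT NULL.
SCHEMA = """
CREATE TABLE platform_events (
    id INTEGER PRIMARY KEY,
    occurred_at TEXT NOT NULL,
    actor_id TEXT NOT NULL,
    entity_id TEXT NOT NULL,
    action_type TEXT NOT NULL,
    before_state TEXT,
    after_state TEXT NOT NULL,
    rationale TEXT NOT NULL
);
CREATE TABLE distributors (
    id TEXT PRIMARY KEY,
    verdict TEXT NOT NULL CHECK (verdict IN ('APPOINT', 'REJECT', 'CONDITIONAL'))
);
"""


def override_verdict(
    conn: sqlite3.Connection,
    actor_id: str,
    distributor_id: str,
    new_verdict: str,
    rationale: Optional[str],
) -> None:
    # `with conn:` commits on success and rolls back if anything inside raises.
    with conn:
        row = conn.execute(
            "SELECT verdict FROM distributors WHERE id = ?", (distributor_id,)
        ).fetchone()
        if row is None:
            raise LookupError(f"unknown distributor {distributor_id!r}")
        # 1. Write the event first. A NULL rationale fails here, before any mutation.
        conn.execute(
            "INSERT INTO platform_events (occurred_at, actor_id, entity_id, action_type,"
            " before_state, after_state, rationale) VALUES (?, ?, ?, ?, ?, ?, ?)",
            (
                datetime.now(timezone.utc).isoformat(),
                actor_id,
                distributor_id,
                "DISTRIBUTOR_VERDICT_OVERRIDDEN",
                json.dumps({"verdict": row[0]}),
                json.dumps({"verdict": new_verdict}),
                rationale,
            ),
        )
        # 2. Apply the mutation in the same transaction. If it fails (e.g. the
        #    CHECK constraint), the event above is rolled back too.
        conn.execute(
            "UPDATE distributors SET verdict = ? WHERE id = ?", (new_verdict, distributor_id)
        )


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
with conn:
    conn.execute("INSERT INTO distributors VALUES ('dist-a', 'REJECT')")
override_verdict(conn, "user-42", "dist-a", "APPOINT", "Stronger balance sheet than dist-b")
```

Note that `with conn:` only scopes the transaction; it does not close the connection.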

This is slightly more complex at the code level - the event schema has to be built into every endpoint, the transaction management has to be right - but the guarantee it provides is dramatically stronger. Every state you observe in the database has a corresponding log entry. Every log entry has a corresponding state. The two are never out of sync. The audit log isn't a reflection of reality; it's a definition of it.
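The "never out of sync" guarantee can also be checked after the fact. A minimal sketch, assuming each event stores a full after-state and that the current table can be loaded as a dict keyed by entity id; `find_drift` is a hypothetical helper name. An empty result means the log and the stored state agree.

```python
from typing import Any


def find_drift(events: list[dict[str, Any]], current: dict[str, dict[str, Any]]) -> list[str]:
    """Return ids of entities whose stored state disagrees with the log."""
    # Project the log forward: the latest after_state per entity is what the log says is true.
    projected: dict[str, dict[str, Any]] = {}
    for event in sorted(events, key=lambda e: e["timestamp"]):
        projected[event["entity_id"]] = event["after_state"]
    # Check both directions: stored state with no log entry, and log entries with no matching state.
    ids = projected.keys() | current.keys()
    return sorted(i for i in ids if projected.get(i) != current.get(i))
```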

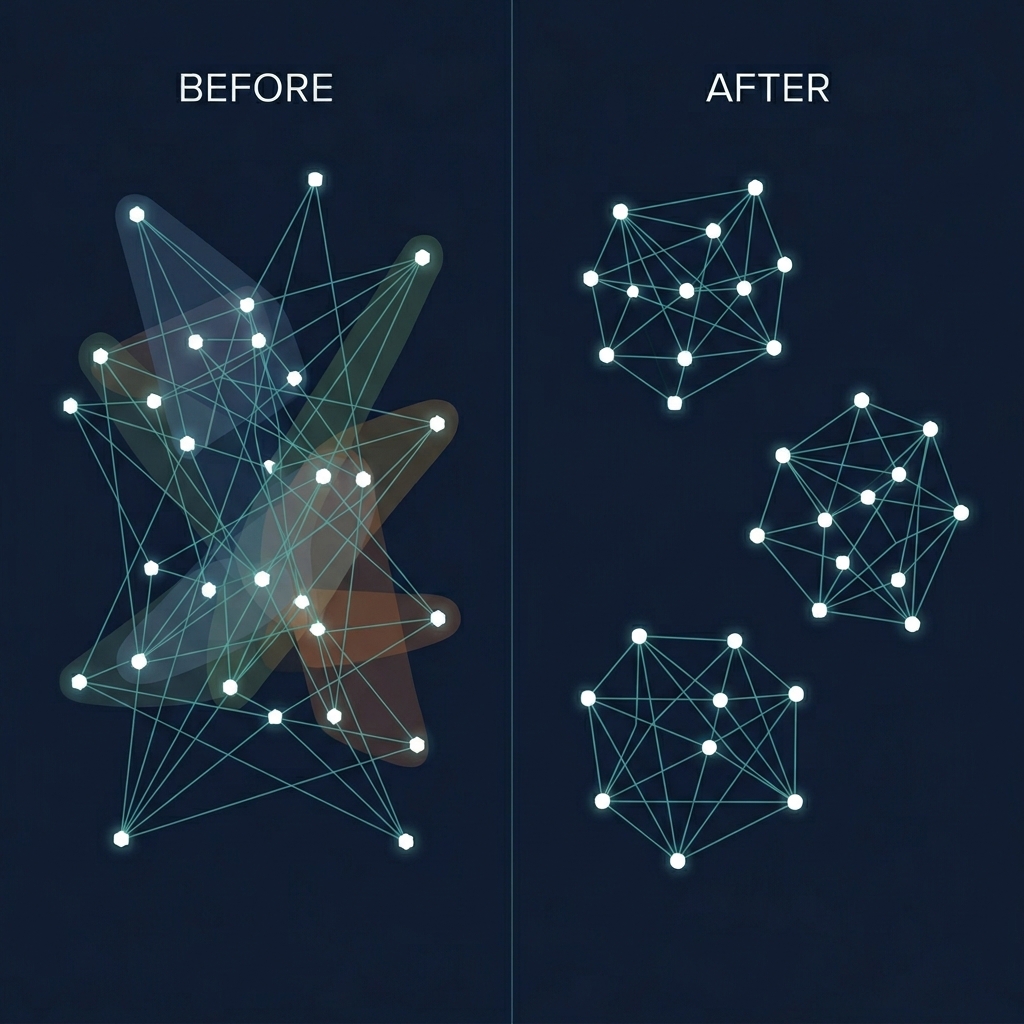

Why most software doesn't do this

Two reasons. First, it's more work. Second, most software isn't used in domains where defensibility matters at the decision level. A CRM can tolerate an imperfect audit log because the cost of a missing "why did the rep change the stage" entry is low. A commercial strategy platform cannot tolerate it, because the cost of a missing "why did we change the distributor verdict" entry is the erosion of the entire engagement's defensibility over time.

Domains where this matters include consulting, legal, medical decision support, financial planning, regulatory compliance - anywhere the decisions taken on the platform will be reviewed, challenged, or relied upon long after they were made. If your platform is in one of these domains and your audit log is a separate table written after the fact, you have a product defect that's invisible today and catastrophic later.

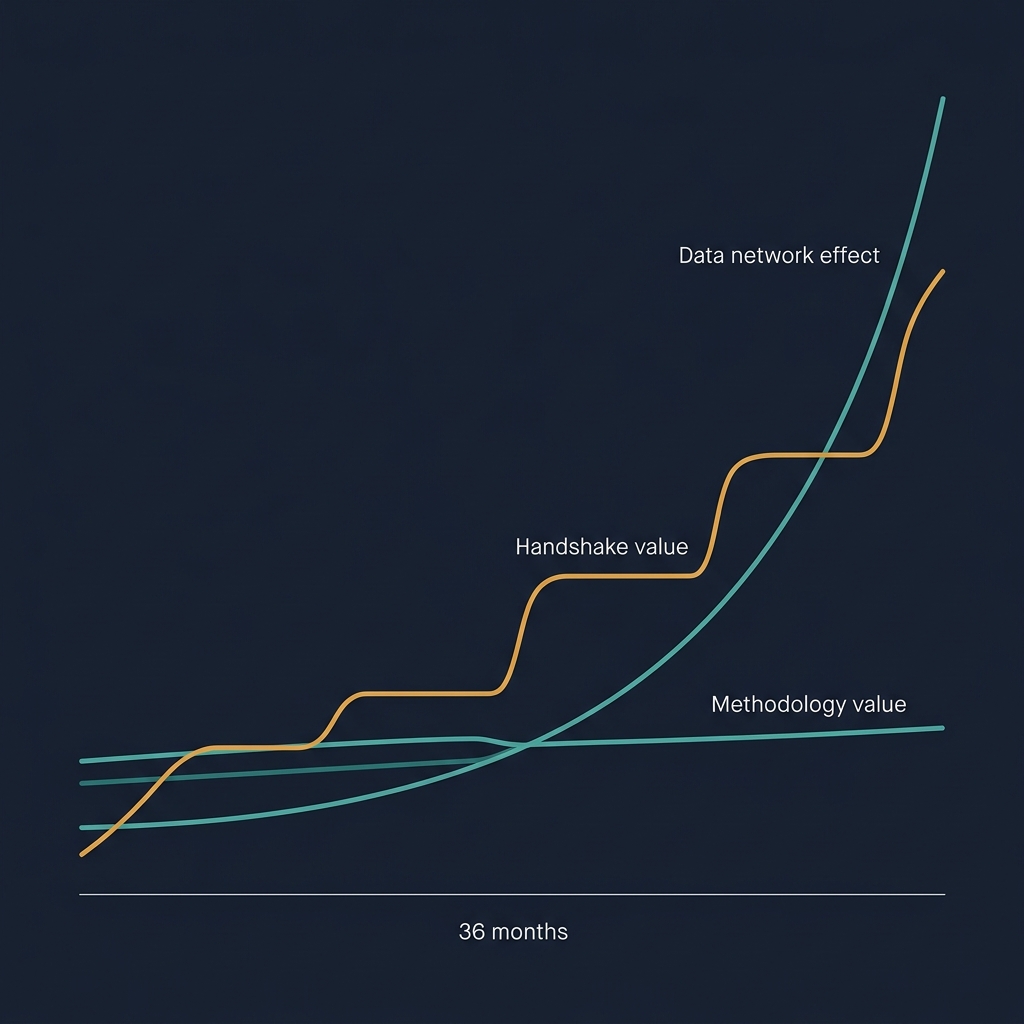

What this means for product positioning

For a commercial strategy platform, the audit log's richness is a defensibility guarantee you can put in a sales pitch. "Every decision made on this platform is captured with the reasoning in the SP's own words, replayable to any point in the engagement's history, queryable for compliance or forensic review." That's not an engineering feature; it's a product differentiator against competitors who treat the audit as infrastructure.

The platform's SP audit screen (the interface for reviewing the engagement's history) is designed to surface this richness. Every action appears in a timeline, filterable by actor, entity, action type, or date range. Clicking an action expands to show before and after states, the SP's rationale, and the confidence context. Exporting the timeline produces a defensible record that stands up to any subsequent review.
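The timeline filters described above reduce to a simple predicate over event records. A minimal sketch; `TimelineEntry` and `filter_timeline` are hypothetical names, and a production screen would likely push these filters into a database query rather than filter in memory.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class TimelineEntry:
    timestamp: datetime
    actor_id: str
    entity_id: str
    action_type: str


def filter_timeline(
    entries: Iterable[TimelineEntry],
    actor_id: Optional[str] = None,
    entity_id: Optional[str] = None,
    action_type: Optional[str] = None,
    start: Optional[datetime] = None,
    end: Optional[datetime] = None,
) -> list[TimelineEntry]:
    """Return matching entries oldest first; a None filter matches everything."""

    def matches(e: TimelineEntry) -> bool:
        return (
            (actor_id is None or e.actor_id == actor_id)
            and (entity_id is None or e.entity_id == entity_id)
            and (action_type is None or e.action_type == action_type)
            and (start is None or e.timestamp >= start)
            and (end is None or e.timestamp < end)  # half-open range [start, end)
        )

    return sorted((e for e in entries if matches(e)), key=lambda e: e.timestamp)
```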

Competitors without this spine cannot defend a single decision past the week it was made. When the new RMD asks "why", their platform can only show the current state. Ours can show the decision, the reasoning, the alternatives considered, and the data confidence at the time. That's not just better audit; it's a materially different product.

The meta-principle

The audit log's transformation from compliance feature to product is one example of a broader pattern. Infrastructure that's good enough to be invisible is infrastructure that can become a product surface. The replay capability emerged from event sourcing done properly. The confidence cascade emerged from confidence being a first-class field on every variable. The multi-tenant membership model emerged from recognising that tenant isolation was inadequate for consulting work.

In each case, the "infrastructure" was done to a standard that went beyond what a compliance checkbox would require. Going beyond the checkbox took more engineering time up front. The payoff wasn't in passing the compliance audit; it was in the product capabilities that became possible because the foundation was stronger than it needed to be.

The lesson for any vertical platform: if your infrastructure is only as good as it has to be, your product is only as good as your infrastructure. If your infrastructure is better than it has to be, your product can eventually be better than your competitors' in ways that aren't obvious until the right use case appears. The audit log being the product isn't a clever design trick; it's a consequence of building the foundation with more care than the compliance standard demanded. The same applies to every other dimension of the platform. Build for the future use cases you can't yet see, and trust that they'll show up.