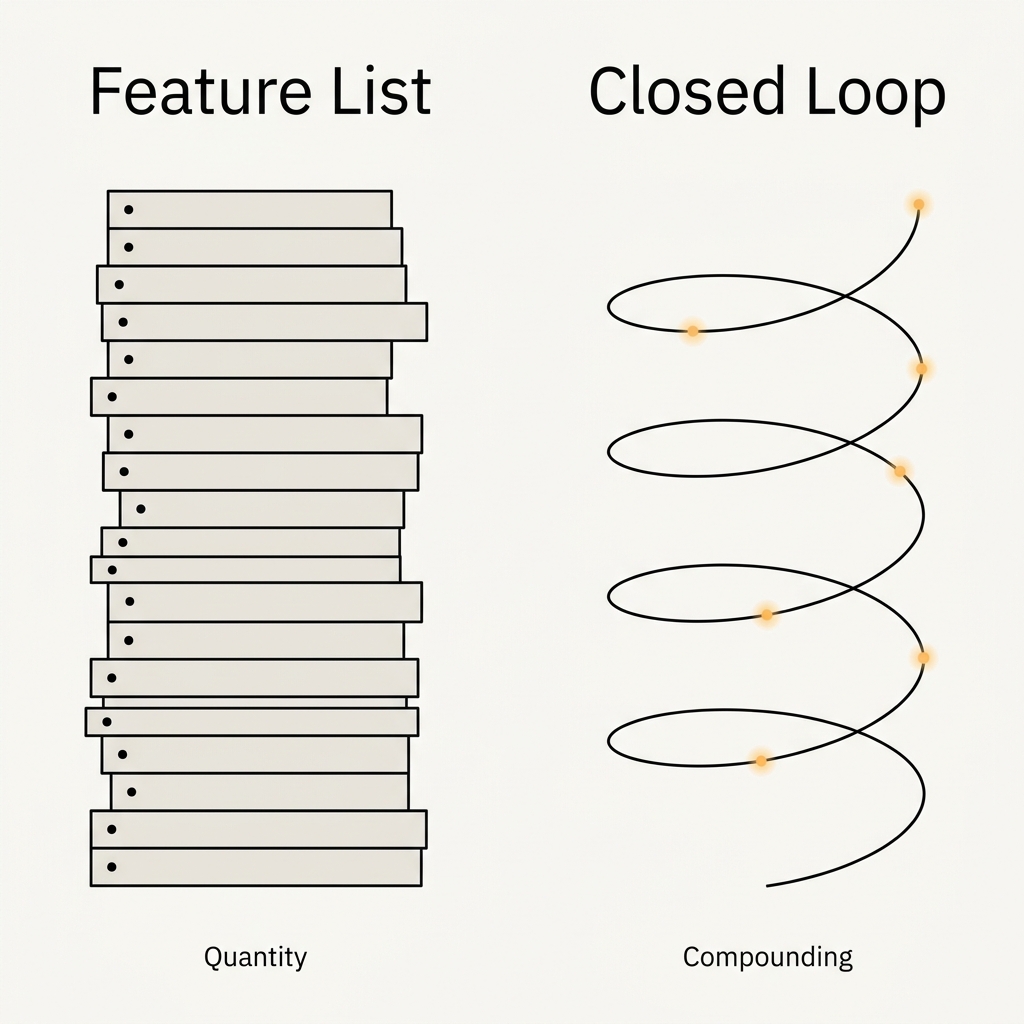

There is a moment in every enterprise software evaluation where the buyer asks the vendor a comparison question. "How is your product different from Salesforce Consumer Goods Cloud?" "Where do you fit relative to Repsly?" "What do you do that SAP doesn't?" The vendor responds with a feature list. Most buyers, having heard hundreds of feature lists across enterprise evaluations, know intuitively that this is the wrong answer - that a feature list competition always favours the incumbent with the larger R&D budget - but they don't always have the language to articulate why. This article is about why, and what the right framing looks like for commercial operating systems specifically.

The feature list trap

The incumbent enterprise platforms in consumer goods - Salesforce CG Cloud, SAP's trade promotion management (TPM) modules, Microsoft Dynamics - all have enormous feature lists. They cover customer business planning, trade promotion management, retail execution, order management, territory planning, and half a dozen other commercial functions. Their feature lists are longer than any startup competitor's will ever be. If the evaluation reduces to features, the incumbent wins by default.

The incumbent wins at the feature level because features are additive and budgets compound. A platform that has existed for fifteen years with a billion-dollar R&D budget will always have more features than a platform that has existed for two years with a fraction of that budget. This is not a contestable game. The only way to compete effectively is to change what is being evaluated - from a feature list to an architectural fit.

The architectural question

Architecture is different from features in a specific way. Features are things the platform does. Architecture is how the platform is structured. The architectural question for a commercial operating system is: does the platform close the loop between strategy, execution, signal, and intervention, and does it close it in a single cumulative data layer? A feature-rich platform that does not close the loop produces disconnected modules. A platform with fewer features that closes the loop produces commercial intelligence. The difference is qualitative, not quantitative, and it compounds over time in ways feature counts do not.

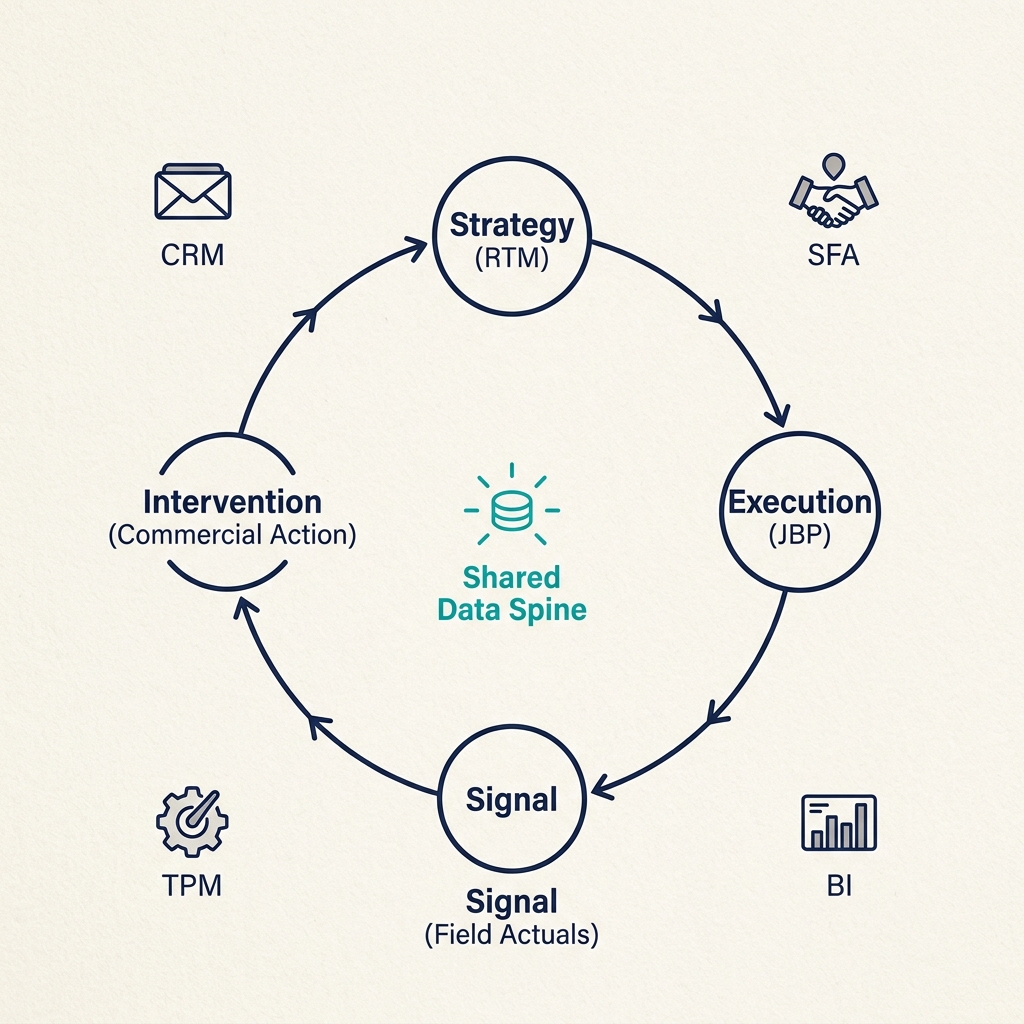

Consider the specific loop required for route-to-market (RTM) in distribution networks. Strategy originates in an RTM investment thesis - where to play, at what cost, with which distributors, through which channels. This thesis gets translated into commercial commitments - a joint business plan (JBP) with revenue, coverage, average order value (AOV), and SKU distribution targets, plus the bilateral investment commitments that support them. Those commitments are executed in the field - visits, orders, refusals, photos, voice notes - every day across thousands of outlets. The field execution produces signals - commitment gaps, refusal patterns, competitive observations, stock velocity divergences - that can only be read correctly in combination. The signals should trigger interventions - specific commercial actions, with expected uplift, with one-click activation, with post-hoc measurement of outcome against the prediction. The intervention outcomes feed back into the strategy layer, informing the next RTM thesis update.

This is a closed loop with four nodes and a feedback path. The nodes are interdependent. Treating each stage as a module, numbered in loop order: Module 2's JBP cannot exist without Module 1's territory design; Module 3's DSR targets cannot be set without Module 2's JBP; Module 4's intervention recommendations cannot be generated without Module 3's field actuals. No single existing platform covers all four nodes. CRMs cover strategy translation but not execution ground truth. Sales force automation tools (SFAs) cover execution but not strategic intent or intervention generation. BI tools visualise any of the four but originate none of them. Trade promotion management modules cover interventions but are not grounded in structural JBP consistency. Each of these platforms can produce a feature list that sounds comprehensive, and each of them, deployed alone, leaves a gap somewhere in the loop.
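The dependency chain above can be sketched as a small graph, which makes the "gap somewhere in the loop" checkable rather than rhetorical. This is a minimal illustration, not a description of any real product's data model: the module names and the per-tool coverage sets are assumptions read off the paragraph above.

```python
from graphlib import TopologicalSorter

# Hypothetical module names. Each module maps to the modules it depends on,
# following the chain described in the text.
DEPENDS_ON = {
    "territory_design": set(),             # Module 1
    "jbp": {"territory_design"},           # Module 2
    "field_execution": {"jbp"},            # Module 3 (DSR targets, field actuals)
    "interventions": {"field_execution"},  # Module 4
}

# Modules in an order where every dependency comes before its dependant.
LOOP_ORDER = list(TopologicalSorter(DEPENDS_ON).static_order())


def missing_modules(covered):
    """Return the loop modules a toolset does not cover, in dependency order."""
    return [module for module in LOOP_ORDER if module not in covered]


def closes_loop(covered):
    """A toolset closes the loop only if no module is missing."""
    return not missing_modules(covered)


# Illustrative coverage per tool category: an assumption based on the
# paragraph above, not an assessment of any specific vendor.
TOOL_COVERAGE = {
    "CRM": {"jbp"},
    "SFA": {"field_execution"},
    "BI": set(),
    "TPM": {"interventions"},
}

for tool, covered in TOOL_COVERAGE.items():
    print(f"{tool}: closes loop = {closes_loop(covered)}, gaps = {missing_modules(covered)}")
```

Under these assumptions every category, evaluated alone, reports at least three missing modules, which is the structural point: the gap is in the graph, not in the feature count.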

What a CRM cannot structurally do

A CRM manages accounts. An account is a record of a customer relationship with a set of contacts, activities, opportunities, and historical transactions. This is useful for a sales team selling directly to end customers. It is insufficient for a distribution network, because a distributor is not a customer in the CRM sense. A distributor is a commercial partner with a bilateral contract, its own P&L economics, a capacity constraint, and a downstream network of outlets that the brand does not directly serve. A CRM cannot model the distributor's return on invested capital (ROIC) against a hurdle rate. A CRM cannot validate the structural consistency of a JBP. A CRM cannot read four commitment gaps simultaneously to produce a performance signature. These are not features the CRM is missing. They are structural requirements that contradict what a CRM is.
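To make "reading four commitment gaps simultaneously" concrete, here is a minimal sketch. The four fields follow the JBP targets named earlier (revenue, coverage, AOV, SKU distribution); the `Commitments` class, the 5% tolerance, and the sample numbers are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Commitments:
    """Hypothetical container for the four JBP commitment metrics."""
    revenue: float
    coverage: float          # e.g. number of outlets served
    aov: float               # average order value
    sku_distribution: float  # e.g. average SKUs listed per outlet


def commitment_gaps(target, actual):
    """Relative gap per metric: negative means the actual is below target."""
    return {
        f.name: (getattr(actual, f.name) - getattr(target, f.name)) / getattr(target, f.name)
        for f in fields(Commitments)
    }


def performance_signature(gaps, tolerance=0.05):
    """The set of metrics that are short of target, read together.

    The tolerance is an assumed threshold, not a figure from the article.
    """
    return tuple(sorted(name for name, gap in gaps.items() if gap < -tolerance))


target = Commitments(revenue=100_000, coverage=500, aov=200, sku_distribution=12)
actual = Commitments(revenue=80_000, coverage=510, aov=157, sku_distribution=12)

signature = performance_signature(commitment_gaps(target, actual))
print(signature)  # ('aov', 'revenue')
```

In this made-up case revenue is short while coverage is on plan, so the shortfall traces to order value rather than to outlets not being visited. A single revenue metric would not distinguish that from a coverage problem, which is why the combination is the unit of analysis.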

The same is true in reverse for SFAs. A sales force automation tool is architected around the individual sales representative - their visit schedule, their activity logging, their productivity metrics. This is necessary but insufficient for a commercial operating system. An SFA cannot originate strategic intent. An SFA cannot model distributor economics. An SFA cannot generate intervention recommendations. Adding those features would require rebuilding the platform's core, because the existing architecture was not designed to carry them.

A BI tool is yet another category. It visualises data that already exists somewhere else. It does not generate data. It does not originate decisions. It does not close any loop. It is a terminal node - data flows in, dashboards flow out, action happens somewhere else or not at all. The distinction matters because BI tools often get evaluated as if they were operating systems, and they are not. They are reporting layers on top of operating systems, and the operating system they report on determines what can be reported.

The compounding advantage of architectural fit

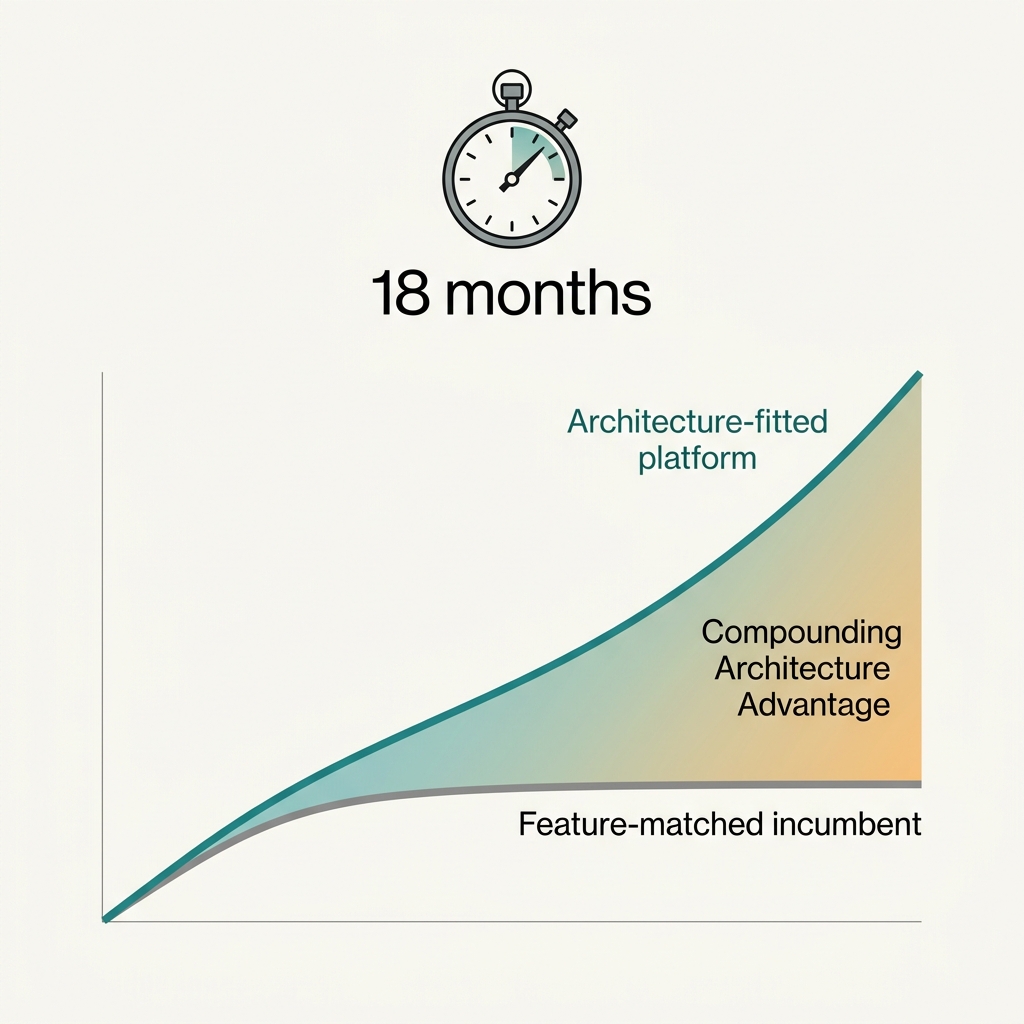

When a platform is architecturally fitted to the commercial problem it is solving, it produces outcomes that feature-rich but architecturally mismatched platforms cannot produce, even when the feature lists overlap. A JBP compliance score that combines field actuals from GPS-verified visits with financial data from the distributor P&L is a different kind of insight from a JBP dashboard that reports pre-computed metrics from a trade promotion management system. The first is diagnostic. The second is descriptive. The first tells you what to do next week. The second tells you what happened last quarter.

The compounding effect shows up eighteen months into a deployment. The feature-matched incumbent has generated a large volume of data, most of which sits in silos - the CRM has the contacts, the SFA has the visits, the BI tool has the dashboards, and the data lake has the residue. The architecture-fitted platform has generated less data but all of it flows through the same spine, and each data point compounds the value of every other data point. The intervention recommendations in month eighteen are qualitatively different from the intervention recommendations in month six, because the underlying intelligence has deepened. The incumbent's system in month eighteen is doing the same things it was doing in month six, because the silos prevent compounding.

Why incumbents cannot easily replicate this

The architecture is not a feature gap that an incumbent can close with a product sprint. It is a structural property of how the platform was designed on day one. An incumbent that wants to close the loop would have to rebuild its data model from scratch - reconcile its CRM schema with its SFA schema with its TPM schema with its BI schema - and the political, technical, and commercial cost of doing that inside a product organisation with millions of existing customers is prohibitive. The incumbent's customers have migration costs that would resist the rebuild. The incumbent's product teams have incentive structures that reward feature additions, not architectural reconstructions. The incumbent's sales motion depends on the current feature list, and the rebuild would temporarily reduce the list.

The outcome is that architecture-fitted competitors have a window - typically three to five years - before incumbents either acquire them or build adjacent products that approximate their approach. Within that window, the architecture-fitted competitor can build a customer base, deepen its canonical intelligence layer, and establish category-defining positioning. After that window, the competitive game becomes about scale and execution rather than architecture, but the head start is usually decisive.

The evaluation question worth asking

If you are a Regional MD or a VP Commercial evaluating a commercial operating system, the question to ask is not about features. The question to ask is about the loop. Does this platform produce an RTM investment thesis? Does the thesis translate directly into a signed JBP with structural consistency validation? Do the JBP commitments produce live DSR targets that are measured from ground-truth field actuals? Do the field actuals feed a signal engine that reads combinations, not single metrics? Do the signals produce intervention recommendations with expected uplift and one-click activation? Do the intervention outcomes feed back into the next RTM thesis update?
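The questions above can be carried into an evaluation as a simple checklist. The sketch below is one hypothetical way to record a vendor's answers and list the links that remain open; the wording of each entry paraphrases the questions, and an unanswered question is treated as a "no" by assumption.

```python
# The six loop questions, paraphrased from the paragraph above.
LOOP_QUESTIONS = (
    "produces an RTM investment thesis",
    "translates the thesis into a signed JBP with structural consistency validation",
    "turns JBP commitments into live DSR targets measured from field actuals",
    "feeds field actuals into a signal engine that reads combinations",
    "produces intervention recommendations with expected uplift and one-click activation",
    "feeds intervention outcomes back into the next RTM thesis update",
)


def open_links(answers):
    """Return the loop questions not answered 'yes', in loop order.

    `answers` maps a question to True or False; a missing entry counts as no.
    """
    return [question for question in LOOP_QUESTIONS if not answers.get(question, False)]


# Hypothetical vendor: yes to everything except the signal engine,
# and no answer given on the feedback path.
answers = {question: True for question in LOOP_QUESTIONS}
answers[LOOP_QUESTIONS[3]] = False
del answers[LOOP_QUESTIONS[5]]

for question in open_links(answers):
    print("Open link:", question)
```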

If the answer is yes to all six, you have a commercial operating system. If the answer is no to any of them, you have a collection of commercial tools. The difference will determine whether your next five years of commercial investment compounds or dissipates. Features fade. Architecture compounds. Choose accordingly.