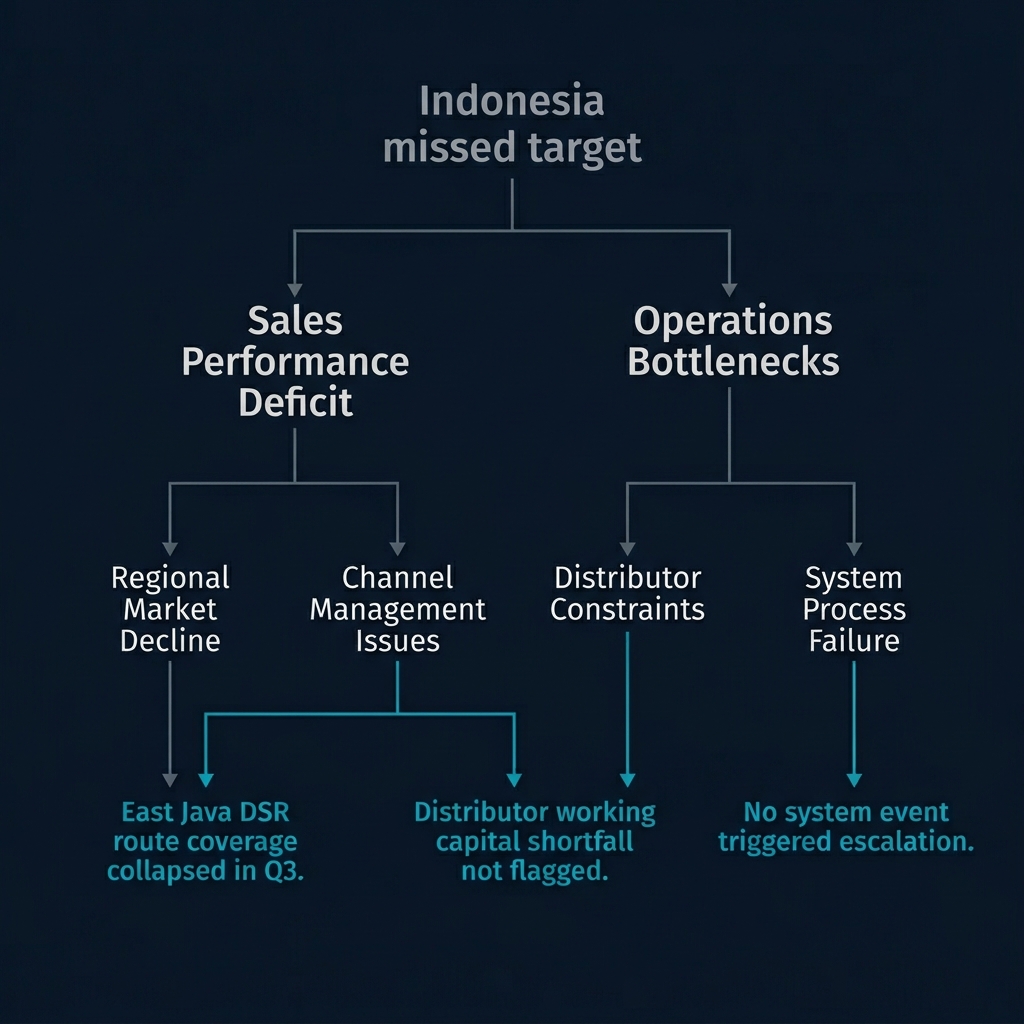

Any framework for assessing distributor quality eventually includes a dimension that captures, in some form, the distributor's respect for the principal relationship. Different frameworks name it differently - "commercial integrity," "ways-of-working compliance," "channel exclusivity," "principal commitment." The underlying concept is the same: is the distributor honoring the commercial agreement with the tenant (the principal brand whose distributors are being assessed), or are they hedging, freelancing, or actively competing against the tenant's interests?

Traditional frameworks treat this dimension as a pass/fail gate. The distributor is either honoring the agreement - exclusive category representation, compliance with pricing, no grey market activity - or they are not. If they are not, the relationship is terminal. Replace them. Find someone else.

This framing works for market leaders with established brand pull and meaningful margin contribution. It is a fantasy for challenger brands.

I want to work through why, because the error is both common and consequential.

A market leader operates from a position of leverage. The distributor needs the market leader's product line more than the market leader needs any single distributor. The market leader can credibly say: honor the terms of our agreement, or we'll find someone else. There are other distributors. The leader's volume is strong enough to compensate a new partner for ramp-up costs. The relationship is asymmetric in the leader's favor, and the commercial terms can be correspondingly strict.

A challenger operates from a position of weakness. The distributor has multiple principals competing for their attention, and the challenger - by definition - is not the most established of them. The challenger's volume, at least initially, is a fraction of what the distributor gets from their primary principals. Asking the distributor to drop competing products, commit to full ways-of-working compliance, and treat the challenger as a strategic priority when the financial numbers don't yet support that ask is not negotiation - it's demanding commitment you haven't earned.

The distributor's behavior, in this context, is not a capability failure. It's rational. The distributor is hedging because hedging is economically correct. The challenger brand hasn't yet demonstrated it's worth prioritizing over the established principals. The challenger is, in commercial terms, a promising but unproven prospect. The distributor is right to keep their options open until the evidence is clearer.

Treating this as a disqualifying gate - replacing the distributor because they haven't committed to the challenger - makes no sense. The replacement distributor will behave identically, because the challenger's position in the market is what drives the behavior, not the individual distributor's character. You can cycle through distributors indefinitely and the outcome will be the same.

The fix is not to lower the dimension's importance. The fix is to recognize that the dimension's role in the assessment output depends on the tenant's market position. For a market leader, it is reasonably a gate. For a challenger, it is a signal to track over time - something that should improve as the tenant earns credibility - but not a gate in year one.

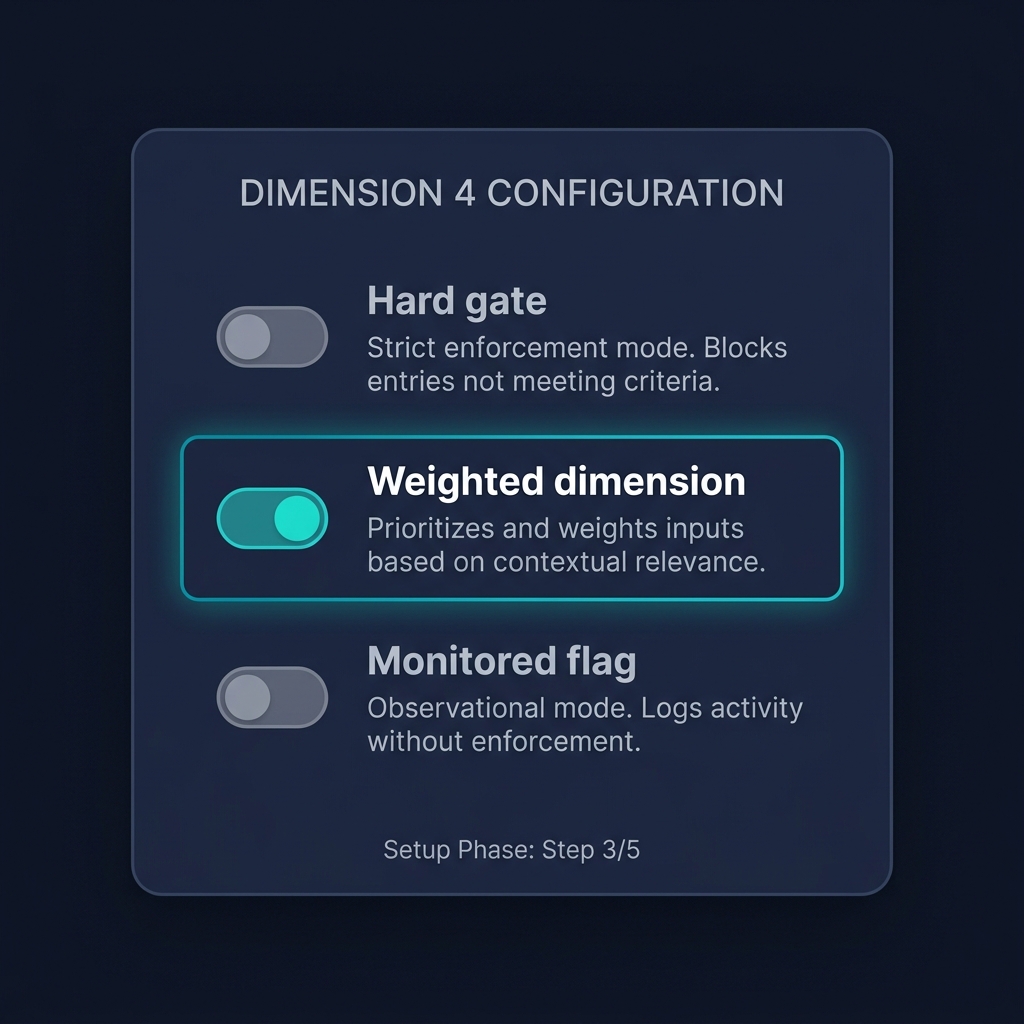

This has a specific product-design implication. The dimension should be configurable, not hardcoded. At tenant setup, the organization chooses how this dimension functions in their distributor assessment.

Option one: hard gate. Any sustained failure on the dimension triggers a Replace/Recruit classification regardless of scores on other dimensions. Appropriate for market leaders whose leverage and volume justify strict commercial terms.

Option two: weighted dimension. The dimension is scored, factored into the composite assessment alongside other dimensions, and contributes to the classification but does not single-handedly determine it. Appropriate for mid-position brands who can impose some commitment but not absolute compliance.

Option three: monitored flag. The dimension is visible and tracked but does not affect the output classification. The tenant knows where the distributor stands on commitment; they don't act on it as a disqualifying signal. Appropriate for challengers whose primary task is building the relationship to the point where commitment becomes earnable.
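To make the three options concrete, here is a minimal Python sketch. The article itself specifies no implementation, so everything in it is an illustrative assumption: the names `Mode`, `Dim4Config`, and `classify`, the 0-to-1 score scale, the 0.4 gate threshold, treating two consecutive low periods as "sustained," the 0.2 weight, and the Retain/Develop/Replace cutoffs. It also assumes that a passed hard gate does not otherwise enter the composite.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    HARD_GATE = "hard_gate"
    WEIGHTED = "weighted"
    MONITORED_FLAG = "monitored_flag"


@dataclass(frozen=True)
class Dim4Config:
    mode: Mode
    gate_threshold: float = 0.4  # hypothetical: a score below this counts as a failure
    sustain_periods: int = 2     # hypothetical: this many consecutive failures = "sustained"
    weight: float = 0.2          # hypothetical: Dimension 4's share of the composite (WEIGHTED only)


def classify(other_scores: dict[str, float], dim4_history: list[float], cfg: Dim4Config) -> str:
    """Classify a distributor from 0-1 scores; dim4_history is oldest-first and non-empty."""
    recent = dim4_history[-cfg.sustain_periods:]
    sustained_failure = len(recent) == cfg.sustain_periods and all(
        s < cfg.gate_threshold for s in recent
    )

    # Hard gate: a sustained failure overrides every other dimension.
    if cfg.mode is Mode.HARD_GATE and sustained_failure:
        return "Replace"

    base = sum(other_scores.values()) / len(other_scores)
    if cfg.mode is Mode.WEIGHTED:
        # Weighted: Dimension 4 contributes to the composite but cannot decide it alone.
        composite = (1 - cfg.weight) * base + cfg.weight * dim4_history[-1]
    else:
        # Monitored flag (or a passed hard gate): measured and kept, but not scored in.
        composite = base

    # Hypothetical cutoffs on the composite score.
    if composite >= 0.75:
        return "Retain"
    if composite >= 0.5:
        return "Develop"
    return "Replace"


others = {"financial_capability": 0.9, "coverage": 0.8, "market_knowledge": 0.7}
history = [0.3, 0.2]  # two consecutive low Dimension 4 scores
for mode in Mode:
    print(mode.value, classify(others, history, Dim4Config(mode)))
# hard_gate Replace
# weighted Develop
# monitored_flag Retain
```

The inputs are identical in all three runs; only the tenant's configuration differs, and so does the classification.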

The tenant chooses at setup, guided by their strategic advisor, based on an honest assessment of their market position. A challenger in a category where they're trying to establish a beachhead will typically set this to "monitored flag." A market leader with brand pull and distributor dependence will typically set this to "hard gate." Most tenants will find themselves somewhere in between.

There is a generalizable principle here about framework design, and it's worth making explicit.

Most assessment frameworks implicitly encode the norms of the archetype the framework designer had in mind. If the designer was thinking about market leaders, exclusivity looks like a gate. If they were thinking about challengers, exclusivity looks like an aspiration. Neither is wrong in its own context. But a framework that bakes in one set of norms cannot serve the other use case without producing wrong answers.

The fix is to separate the framework's analytical logic from its normative application. The analytical logic is: measure the distributor's behavior on this dimension. The normative application is: decide how this measurement translates into a classification. The first is universal. The second is contextual. By separating them and making the normative application a tenant-configurable setting, the framework remains robust across market positions.

This principle extends beyond the specific dimension of principal respect. Almost every assessment dimension has context-dependent norms. A financial capability threshold that's reasonable for a premium category is crushing for a commodity category. A coverage expectation that's reasonable in an urban market is absurd in a rural one. A growth trajectory that's healthy for a mature brand is catastrophic for a launch brand.

Every framework that aspires to work across contexts has to externalize these normative applications as configuration rather than baking them in as rules. It's more work at design time. It's the only way the framework produces trustworthy answers at use time.

One more observation, about the negotiation dynamic that making Dimension 4 (principal respect) configurable enables.

When a tenant sets Dimension 4 as a monitored flag, they're making a structural statement about their market position that they may not have made explicitly before. They're saying: we are not yet in a position to demand principal commitment, so we're going to earn it instead. That's an honest statement. It shapes how the commercial team approaches distributor conversations, how they structure incentive programs, how they communicate with distributors about the trajectory of the relationship.

When the same tenant, three years later, moves Dimension 4 to "weighted" and eventually to "hard gate," that's a visible indicator of changed market position. The setting is a kind of strategic pronouncement. It signals to everyone - internal teams, distributors, advisors - that the brand has arrived at a different position and the commercial expectations are updating accordingly.

Most organizations never make this transition explicit. They either hold on to challenger-era informal relationships longer than they should, or they demand market-leader-style compliance before they've earned the leverage to do so. A configurable framework makes the transition visible and deliberate. That's a second-order benefit that's worth as much as the analytical one.

The configurability principle extends to other dimensions of distributor assessment, and the generalization is worth making explicit.

Financial capability, for instance, is usually framed as a threshold - a distributor needs at least X in working capital, Y in warehouse square footage, Z in vehicles. These thresholds are presented as objective, but they embed assumptions about the tenant's ambition level and the territory's scale. A distributor with USD 2M in working capital is inadequate for a tenant planning aggressive growth in a large territory; the same distributor is more than adequate for a tenant operating at steady state in a small one. Baking a single threshold into the framework means it's wrong for most of the contexts it's applied to.

The fix is the same pattern: make the threshold configurable, with the tenant selecting at setup based on their strategic context, and the framework scoring the distributor against the configured threshold rather than against a hardcoded universal standard.
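A sketch of the same pattern for financial capability, in Python, assuming the framework scores each metric as a coverage ratio against the tenant's configured minimum (capped at 1.0) and averages the ratios. The field names, the scoring rule, and every number other than the USD 2M working capital from the example above are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class FinancialThresholds:
    """Tenant-configured minimums, chosen at setup (hypothetical fields)."""
    working_capital_usd: float
    warehouse_sq_ft: float
    vehicles: int

    def __post_init__(self) -> None:
        # Ratios below divide by these, so they must be positive.
        if min(self.working_capital_usd, self.warehouse_sq_ft, self.vehicles) <= 0:
            raise ValueError("thresholds must be positive")


@dataclass(frozen=True)
class DistributorFinancials:
    working_capital_usd: float
    warehouse_sq_ft: float
    vehicles: int


def financial_capability_score(d: DistributorFinancials, t: FinancialThresholds) -> float:
    """0-1 score against the tenant's own thresholds: each metric's coverage
    ratio is capped at 1.0 and the ratios are averaged."""
    ratios = [
        min(d.working_capital_usd / t.working_capital_usd, 1.0),
        min(d.warehouse_sq_ft / t.warehouse_sq_ft, 1.0),
        min(d.vehicles / t.vehicles, 1.0),
    ]
    return sum(ratios) / len(ratios)


distributor = DistributorFinancials(working_capital_usd=2_000_000, warehouse_sq_ft=40_000, vehicles=20)
steady_small = FinancialThresholds(working_capital_usd=1_500_000, warehouse_sq_ft=30_000, vehicles=15)
growth_large = FinancialThresholds(working_capital_usd=8_000_000, warehouse_sq_ft=120_000, vehicles=60)

print(financial_capability_score(distributor, steady_small))            # 1.0
print(round(financial_capability_score(distributor, growth_large), 3))  # 0.306
```

The distributor's figures never change; the verdict moves from fully adequate to weak because the configured thresholds changed.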

Market knowledge is another dimension where context changes everything. In a category where consumer decisions are heavily influenced by trade recommendations (lubricants, for example, where mechanics often decide for consumers), distributor market knowledge includes deep relationships with trade influencers. In a category where consumer decisions are self-directed (snack foods, for example), distributor market knowledge is more about outlet coverage and shelf discipline. A framework that evaluates market knowledge the same way across categories will systematically misjudge one or the other.
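One way to express category-dependent market knowledge, sketched in Python: the framework measures the same sub-criteria everywhere, and a category profile decides how much each one counts. The sub-criterion names and profile weights are illustrative assumptions, not calibrated values.

```python
from __future__ import annotations

# Hypothetical category profiles: how much each sub-criterion counts toward
# market knowledge. Weights within a profile sum to 1.0.
CATEGORY_PROFILES: dict[str, dict[str, float]] = {
    # Trade-influenced: mechanics often choose the lubricant for the consumer.
    "lubricants": {
        "trade_influencer_relationships": 0.60,
        "outlet_coverage": 0.25,
        "shelf_discipline": 0.15,
    },
    # Self-directed: consumers choose snacks at the shelf.
    "snack_foods": {
        "trade_influencer_relationships": 0.10,
        "outlet_coverage": 0.45,
        "shelf_discipline": 0.45,
    },
}


def market_knowledge_score(sub_scores: dict[str, float], category: str) -> float:
    """Weight the same 0-1 sub-scores by the category's profile."""
    profile = CATEGORY_PROFILES[category]
    return sum(weight * sub_scores[name] for name, weight in profile.items())


# One distributor: strong with mechanics, thin outlet coverage.
sub = {"trade_influencer_relationships": 0.9, "outlet_coverage": 0.4, "shelf_discipline": 0.3}
print(round(market_knowledge_score(sub, "lubricants"), 3))   # 0.685
print(round(market_knowledge_score(sub, "snack_foods"), 3))  # 0.405
```

The same distributor looks solid for lubricants and weak for snack foods. A single fixed profile would have to give the same answer for both categories, and would be wrong for one of them.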

The configurability principle generalizes: any assessment dimension whose relative importance or ideal profile varies by context should be configurable at setup, not hardcoded. The framework provides the structure - what dimensions to measure, how to score them, how to combine them - but the normative weights and thresholds are tenant-specific. This sounds like a lot of flexibility, and it is, but the flexibility is bounded by the framework's structure, so it doesn't collapse into "everyone builds their own framework."
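A sketch of what "bounded" can mean in practice, in Python: the framework fixes the list of dimensions and the combination rule (here, a weighted average), and the tenant supplies only the weights, which are validated against that structure. The dimension names and the validation rules are assumptions for illustration.

```python
from __future__ import annotations

import math

# The framework fixes WHICH dimensions exist; tenants cannot add or drop any.
# These names are hypothetical labels for illustration.
FRAMEWORK_DIMENSIONS = ("financial_capability", "coverage", "market_knowledge", "principal_respect")


def validate_weights(weights: dict[str, float]) -> dict[str, float]:
    """Accept a tenant's weights only if they fit the framework's structure."""
    if set(weights) != set(FRAMEWORK_DIMENSIONS):
        raise ValueError(f"weights must cover exactly {FRAMEWORK_DIMENSIONS}")
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("weights must sum to 1.0")
    return weights


def composite(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Combine 0-1 dimension scores using validated tenant weights."""
    w = validate_weights(weights)
    return sum(w[d] * scores[d] for d in FRAMEWORK_DIMENSIONS)


tenant_weights = {"financial_capability": 0.3, "coverage": 0.3, "market_knowledge": 0.2, "principal_respect": 0.2}
scores = {"financial_capability": 0.8, "coverage": 0.6, "market_knowledge": 0.7, "principal_respect": 0.3}
print(round(composite(scores, tenant_weights), 3))  # 0.62

try:
    composite(scores, {**tenant_weights, "brand_affinity": 0.0})
except ValueError as err:
    print("rejected:", err)  # an extra dimension is outside the framework's structure
```

Rejecting unknown dimensions and weights that don't sum to 1.0 is what keeps tenant configuration from turning into a private framework.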

There's a deeper question lurking here about what an assessment framework is for.

Frameworks can serve two different purposes, and the choice between them affects how they should be designed.

Purpose one: classification. The framework tells you whether a distributor is good or bad, viable or not viable, keep or replace. It produces a label that drives an action. For this purpose, frameworks need clear thresholds, binary gates, and unambiguous outputs. The whole point is to enable decisions without extended deliberation.

Purpose two: understanding. The framework helps you see the distributor's position across multiple dimensions, identifies their specific strengths and weaknesses, and supports a conversation about what needs to change. For this purpose, frameworks need nuance, gradation, and explicit acknowledgment of context-dependence. The whole point is to enable deliberation rather than replace it.

Most assessment frameworks try to do both and end up doing neither well. They add gates (classification) but also gradations (understanding), and the result is a hybrid that's too mechanical for real conversation and too soft for confident decisions.

The configurable-framework approach implicitly commits to purpose two. The framework is for understanding. Classification - the Retain/Develop/Replace/Exit output - is produced by the framework plus the tenant's configuration, and the tenant remains accountable for the classification decision. The framework doesn't say "this distributor is a Replace." The framework says "this distributor scores low on Dimension 4, and given your configuration of Dimension 4 as a hard gate, that scoring classifies them as Replace." The accountability stays with the tenant, as it should, because the tenant is the one making the commercial commitment.
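A small Python sketch of output that keeps accountability visible: every explanation states the measurement and the tenant's configuration separately, mirroring the sentence above. The function name, the `failing` flag (assumed to be computed upstream under the tenant's own rule), and the wording templates are hypothetical.

```python
from __future__ import annotations

from enum import Enum


class Mode(Enum):
    HARD_GATE = "hard gate"
    WEIGHTED = "weighted dimension"
    MONITORED_FLAG = "monitored flag"


def explain_dimension(dimension: str, score: float, failing: bool, mode: Mode) -> str:
    """Phrase a dimension's effect as measurement plus tenant configuration,
    so the label is never presented as the framework's own verdict."""
    measured = f"This distributor scores {score:.2f} on {dimension}"
    config = f"given your configuration of {dimension} as a {mode.value}"
    if mode is Mode.HARD_GATE:
        effect = "that scoring classifies them as Replace" if failing else "the gate is passed"
    elif mode is Mode.WEIGHTED:
        effect = "that scoring is one weighted input to the composite classification"
    else:
        effect = "that scoring is tracked but does not affect the classification"
    return f"{measured}, and {config}, {effect}."


print(explain_dimension("Dimension 4", 0.25, True, Mode.HARD_GATE))
# This distributor scores 0.25 on Dimension 4, and given your configuration of
# Dimension 4 as a hard gate, that scoring classifies them as Replace.
```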

This has implications for how the framework is deployed internally.

Frameworks deployed as classification tools tend to get used as rubber stamps. "The framework says we should replace this distributor." The user abdicates judgment to the framework, and when the framework produces a wrong answer (which it will, periodically, because frameworks can't capture everything), the error compounds.

Frameworks deployed as understanding tools tend to get used as conversation anchors. "The framework shows this distributor scoring low on market knowledge and high on financial capability. Let's think about what that means for this specific territory." The user retains judgment, uses the framework to structure the analysis, and makes the classification decision with the framework as input rather than oracle.

The second mode is better, but it requires work upstream. The framework has to be designed for it, the tenant has to configure it for it, and the organization has to train people to use it that way. Without those three things, the framework defaults to classification-tool usage regardless of how it was designed, and the abdication problem appears.

One operational detail worth mentioning.

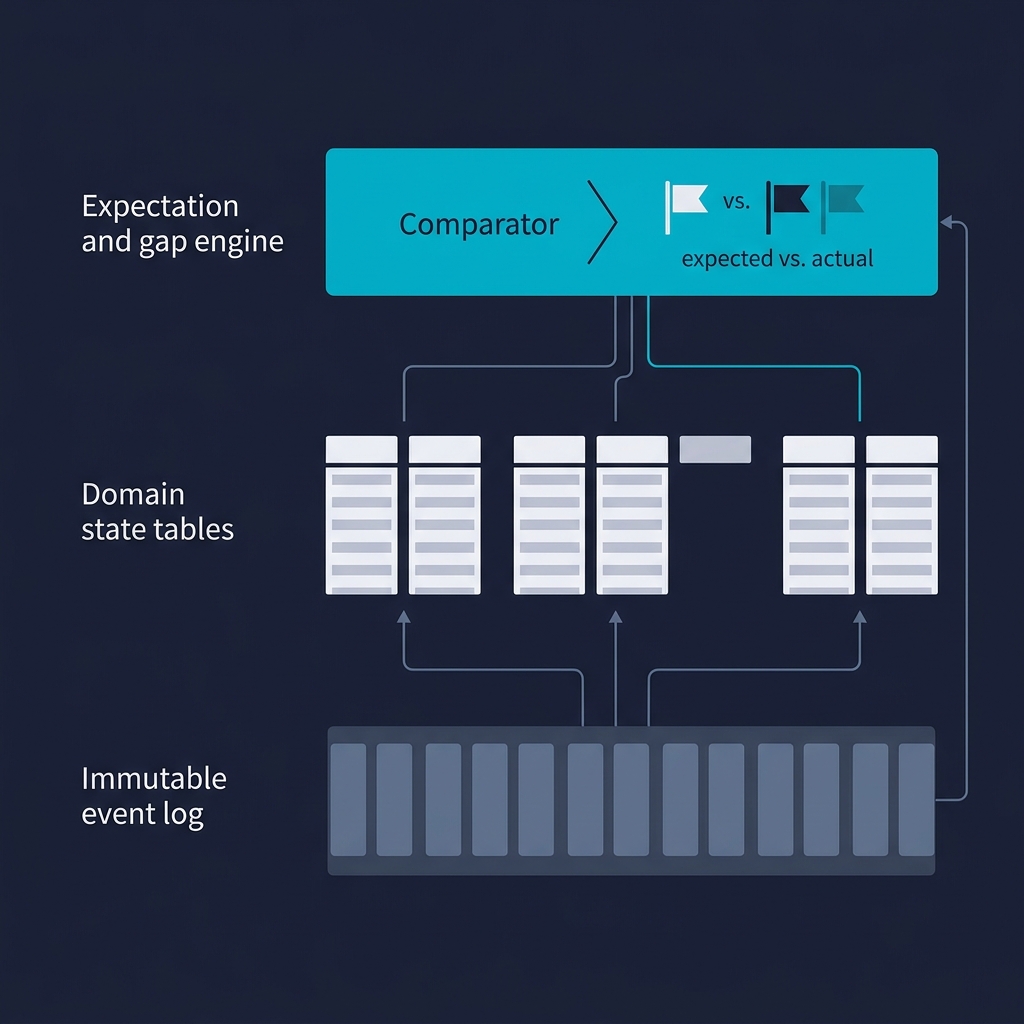

When Dimension 4 is set to "monitored flag," the framework still tracks the dimension carefully. Scores are still produced, evidence is still gathered, trends are still visible over time. The difference from "hard gate" isn't that the dimension stops being measured; it's that the measurement doesn't drive classification. Over time, the tracked scores tell a story. They might show that distributor commitment is improving as the tenant's market position strengthens, or they might show that commitment is deteriorating even as the business grows. Both trajectories are useful to see, and the "monitored flag" configuration is what makes them visible.

For a challenger brand using this configuration, the Dimension 4 trajectory is a leading indicator of whether the strategic position is strengthening enough to start shifting to "weighted" or eventually "hard gate." You don't make that shift based on a single year's scores; you make it based on consistent improvement across multiple years. The tracked data is what makes that multi-year judgment possible.
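A minimal Python sketch of that multi-year check, assuming one tracked Dimension 4 score per year and treating "consistent improvement" as three consecutive year-over-year increases. The three-year window, the one-step ordering of modes, and the function names are illustrative assumptions. The function only suggests a shift; the decision stays with the tenant.

```python
from __future__ import annotations

# Hypothetical ordering of the three configurations, from challenger to leader norms.
MODE_ORDER = ["monitored_flag", "weighted", "hard_gate"]


def consistent_improvement(yearly_scores: list[float], min_years: int = 3) -> bool:
    """True only if each of the last `min_years` year-over-year changes is an increase.
    yearly_scores is oldest-first, one tracked Dimension 4 score per year."""
    if len(yearly_scores) < min_years + 1:
        return False  # not enough history for a multi-year judgment
    window = yearly_scores[-(min_years + 1):]
    return all(later > earlier for earlier, later in zip(window, window[1:]))


def suggest_next_mode(current: str, yearly_scores: list[float], min_years: int = 3) -> str:
    """Suggest moving one step toward stricter norms; the tenant still decides."""
    i = MODE_ORDER.index(current)
    if i + 1 < len(MODE_ORDER) and consistent_improvement(yearly_scores, min_years):
        return MODE_ORDER[i + 1]
    return current


print(suggest_next_mode("monitored_flag", [0.30, 0.35, 0.42, 0.50]))  # weighted
print(suggest_next_mode("monitored_flag", [0.30, 0.50, 0.45, 0.60]))  # monitored_flag
```

Requiring several consecutive increases, rather than one strong year, is the code-level version of not shifting on a single year's scores.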

Without the tracking, the shift from challenger norms to market-leader norms has no evidentiary basis. It happens - or doesn't - based on feelings about the market position, which tend to lag reality in both directions. With tracking, the shift can be data-informed. That's the value of continuous measurement even in configurations that don't use the measurements for immediate classification.

The framework, configured correctly, is an instrument for strategic self-awareness over time. Classification is a byproduct. Most organizations have it backwards.